Physical Intelligence recently released the technical report for π*0.6 [1]. One thing that caught my eye is that they run real-world RL directly on physical robots. Real-world RL has been quietly piling up results over the past few years, so this feels like a good moment to look back at how this line of work has evolved and where it might go next.

Background: What Is Real-World RL?

For a long time, robot RL happened almost entirely inside a simulator. MuJoCo, Isaac Gym, Isaac Lab, and ManiSkill are essentially training grounds: you drop a virtual robot in, let it run hundreds of millions of actions, and eventually get a policy that maximizes return.

Once the policy stabilizes in sim, you try to move it onto a real robot. This sim-to-real transfer usually needs extra tricks like domain randomization or residual actions, all aimed at closing the dynamics mismatch between simulation and the real world. This mismatch is the familiar Sim2Real Gap. The more dynamic and long-horizon the task, the more obvious the gap becomes.

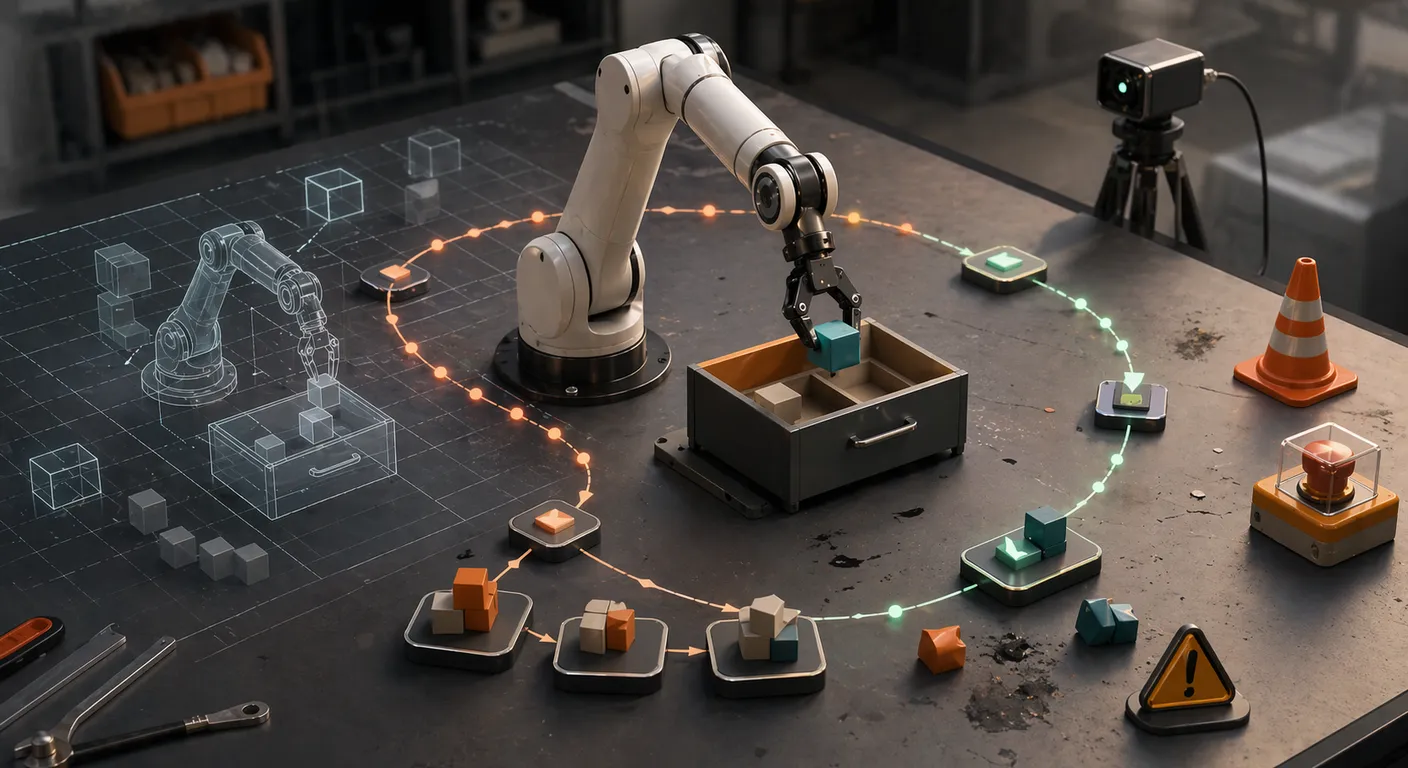

Real-World RL wants to take another route: let real-robot data carry most of the learning, use simulation only as a rough warm-up if at all, and improve the policy through repeated real interaction. The direct payoff is that training and deployment happen under the same dynamics, so the Sim2Real Gap is simply not there.

The cost is also concrete. Hardware breaks, objects fall off the table, environments are messy, and humans cannot sit next to a robot forever.

First Stage: From Learning in Simulation to Learning in Reality

If you only look at the titles, DayDreamer and A Walk in the Park look like two completely separate paths, one from the world-model side, the other from the model-free side.

Stretched out over a longer timeline, though, they are really doing the same thing: showing that RL in the real world is actually doable.

DayDreamer: Not in World, in World Model

What DayDreamer does, put bluntly, is move Dreamer onto real robots and see whether it can still learn. It runs on several platforms (a quadruped learning to walk, robot arms learning pick-and-place, and a small wheeled robot learning to navigate), all without simulation or human teleoperation, with data collection and training running online in parallel. [2]

The world model matters here. It is effectively an internal simulator. The policy can train inside the learned model first and only then execute in the real world, which keeps the number of real interactions manageable.

That single trick is what makes online RL on a real robot actually feasible.
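To make the "internal simulator" idea concrete, here is a deliberately tiny sketch. It is not DayDreamer's method (which learns a latent world model from images and trains an actor-critic inside it). It assumes a hypothetical 1-D system with linear dynamics, fits a model from a handful of real transitions, and then picks a feedback gain using only imagined rollouts. All names (`real_step`, `fit_linear_model`, `imagined_return`) are made up for illustration.

```python
import random

def real_step(x, u):
    """Stand-in for the physical robot. The learner never sees these numbers."""
    return 0.9 * x + 0.5 * u

def collect_real_data(n, rng):
    """Run n random actions on the 'real' system and log (x, u, x_next)."""
    data, x = [], 1.0
    for _ in range(n):
        u = rng.uniform(-1.0, 1.0)
        x_next = real_step(x, u)
        data.append((x, u, x_next))
        x = x_next
    return data

def fit_linear_model(data):
    """Least-squares fit of x_next = a*x + b*u via the 2x2 normal equations."""
    sxx = sum(x * x for x, u, _ in data)
    sxu = sum(x * u for x, u, _ in data)
    suu = sum(u * u for x, u, _ in data)
    sxy = sum(x * y for x, u, y in data)
    suy = sum(u * y for x, u, y in data)
    det = sxx * suu - sxu * sxu
    a = (sxy * suu - sxu * suy) / det
    b = (sxx * suy - sxu * sxy) / det
    return a, b

def imagined_return(a, b, gain, x0=1.0, horizon=20):
    """Roll out the policy u = -gain * x inside the learned model only."""
    x, total = x0, 0.0
    for _ in range(horizon):
        x = a * x + b * (-gain * x)
        total -= x * x  # reward: stay close to zero
    return total

rng = random.Random(0)
data = collect_real_data(30, rng)           # 30 real interactions
a_hat, b_hat = fit_linear_model(data)       # learned "world model"
gains = [i / 10 for i in range(31)]         # candidate policies
best_gain = max(gains, key=lambda g: imagined_return(a_hat, b_hat, g))
```

The point of the toy is the ratio: 30 real steps buy 31 × 20 = 620 imagined steps of policy search, and the robot is never touched while candidates are compared.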

A Walk in the Park: Algorithm or Engineering?

A year later, A Walk in the Park gave a much blunter result. No world model. No new algorithm. Mostly just SAC.

But with a carefully designed control stack, state representation, and training loop, an A1 learns to walk stably outdoors in roughly 20 minutes.

The real takeaway from this paper is that the win came from system engineering, not from a new loss function.

RoboCat: Self-Generated Data

In 2023, DeepMind’s RoboCat went in a somewhat different direction. It wasn’t designed for Real-World RL per se, but it plays like a rehearsal for what real-world RL eventually needs. [3]

RoboCat is built on a Gato-style visual decision Transformer. It first trains a generalist agent on demonstration data covering many robots and many tasks, and then enters a self-improvement loop: humans give 100 to 1,000 demos for a new task, the model fine-tunes on them, practices in sim or on real robots about ten thousand times, and the generated trajectories feed back into the training set to produce a newer, stronger version.

It doesn’t lean on online RL the way DayDreamer or A Walk in the Park does, but it plants another important idea:

A general robot policy can keep getting stronger by generating its own data and improving itself.

This idea comes back as a core theme later in π*0.6.

Second Stage: From Can Learn to Learns Well

The first stage answered a basic question: can real-world RL learn behaviors at all.

Once you try to deploy any of these systems, you start caring about two other things: whether the robot can run for a long time without going off the rails, and whether the success rate can climb close to 100%.

A lot of the work between 2023 and 2025 is essentially an answer to those two questions.

HIL-SERL: Data + Human Corrections + RL

HIL-SERL (Human-in-the-Loop Sample-Efficient RL) comes from Berkeley RAIL, and it appeared in Science Robotics 2025. The tasks it targets are much harder than learning to walk: whipping a block out of a Jenga tower, precise assembly, dual-arm coordination, and flipping an object in a pan. This is real manipulation. [4]

The training recipe is simple but works. Collect good and bad trajectories by teleoperation. Train a binary reward model that decides success or failure. Use a small number of demos to initialize the policy. Then run online RL on the real robot with humans stepping in at key moments to correct the behavior, and let the policy improve on those corrections together with the learned reward.
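The data-collection side of that recipe can be sketched in a few lines. This is a toy under loud assumptions, not the HIL-SERL code: the "robot" is a 1-D point that should reach zero, the "human" is a scripted rule that takes over only when the state drifts far away, and the binary reward model is replaced by a fixed threshold. The names `reward_classifier`, `human_correction`, and `Transition` are hypothetical.

```python
import random
from dataclasses import dataclass

@dataclass
class Transition:
    state: float
    action: float
    reward: float
    next_state: float
    intervened: bool  # True when the action came from the human

def reward_classifier(state):
    """Stand-in for the learned binary success classifier."""
    return 1.0 if abs(state) < 0.05 else 0.0

def policy_action(state, rng):
    """Untrained policy: small random moves."""
    return rng.uniform(-0.2, 0.2)

def human_correction(state):
    """Scripted 'operator': steps in only when the state is far from the goal."""
    if abs(state) > 0.5:
        return -0.3 if state > 0 else 0.3
    return None

def collect_episode(rng, steps=50, start=0.4):
    buffer, state = [], start
    for _ in range(steps):
        action = policy_action(state, rng)
        correction = human_correction(state)
        intervened = correction is not None
        if intervened:
            action = correction  # the human's action overrides the policy's
        next_state = state + action
        reward = reward_classifier(next_state)
        buffer.append(Transition(state, action, reward, next_state, intervened))
        state = next_state
        if reward == 1.0:
            break  # classifier says the task is done
    return buffer

episode = collect_episode(random.Random(0))
num_interventions = sum(t.intervened for t in episode)
```

An off-policy learner would then train on this buffer. The `intervened` flag is what lets the learner treat human corrections differently from the policy's own exploration, and the override is also what keeps the state inside a bounded region while the policy is still bad.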

The results are direct. On a set of complex manipulation tasks, HIL-SERL pushes the success rate of vision-based policies close to 100% after only 1 to 2.5 hours of interaction, and the final execution is actually faster than human teleoperation.

Two points from this paper had a strong influence on later work:

- Real-world RL shouldn’t start from random exploration; it should stand on top of demonstrations

- Human intervention isn’t noise; it’s the component that makes RL both safe and efficient

You can read it as an upgrade of what DayDreamer and A Walk in the Park did: from can learn to learns well, and learns fast.

RL-100: Systematizing the Pipeline

If HIL-SERL is still a method, RL-100 has already grown into an engineering system.

RL-100 proposes a three-stage pipeline: imitation learning to inject human experience into a diffusion policy, offline RL with OPE (offline policy evaluation) for conservative policy improvement, and finally a short online RL phase on the real robot to clean up the remaining failure modes. [5]
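The "conservative improvement" step is easiest to see as a gate: a candidate policy replaces the current one only if an offline estimate says it is better by some margin. The sketch below is an assumption-heavy illustration, not RL-100's estimator. It uses a three-action bandit, a logged dataset from a uniform behavior policy, and a plain importance-sampling estimate. `is_estimate`, `gated_update`, and the `margin` value are hypothetical.

```python
def is_estimate(logged, behavior_probs, target_probs):
    """Importance-sampling estimate of a target policy's value from logged data."""
    total = 0.0
    for action, reward in logged:
        weight = target_probs[action] / behavior_probs[action]
        total += weight * reward
    return total / len(logged)

def gated_update(current, candidate, logged, behavior_probs, margin=0.02):
    """Accept the candidate only if its offline estimate beats the current policy."""
    v_current = is_estimate(logged, behavior_probs, current)
    v_candidate = is_estimate(logged, behavior_probs, candidate)
    if v_candidate >= v_current + margin:
        return candidate, True
    return current, False

# Logged data: each of 3 actions tried 10 times under a uniform behavior policy.
rewards_by_action = {0: 0.2, 1: 0.5, 2: 0.9}
logged = [(a, r) for a, r in rewards_by_action.items() for _ in range(10)]
behavior = [1 / 3, 1 / 3, 1 / 3]

current = [1 / 3, 1 / 3, 1 / 3]
good_candidate = [0.1, 0.2, 0.7]   # shifts mass toward the best action
bad_candidate = [0.6, 0.3, 0.1]    # shifts mass toward the worst action

policy_after_good, accepted_good = gated_update(current, good_candidate, logged, behavior)
policy_after_bad, accepted_bad = gated_update(current, bad_candidate, logged, behavior)
```

Here the first candidate is accepted and the second is rejected without either of them ever running on hardware, which is the whole appeal of putting an evaluation gate in front of the real robot.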

They validated it on seven real-robot tasks, including cloth folding, pouring fluids and granular materials, dynamic pushing, dexterous nut tightening, and multi-stage orange juicing. Across 900 evaluations they report 900/900 successes, including up to 250 consecutive trials on a single task without a failure.

Technically, RL-100 and HIL-SERL share the same spirit:

- Both rely on demos and offline data to keep the starting point reasonable

- All exploration stays inside safety boundaries monitored by OPE or humans

- RL’s job is to patch long-tail failures, not to invent motions from scratch

What RL-100 adds is the thing that actually matters: it turns the full pipeline into a framework that is relatively agnostic to tasks, robot platforms, and sensing modalities. That is a step from paper demo toward a reusable system.

Contact-Rich Sim-to-Real: A Compromise

For assembly and tight insertion, where contact mechanics are very sensitive, learning entirely in the real world is still too risky. Work from Tomizuka’s group proposes a hybrid route: learn trajectories and compliance parameters with RL in simulation, and in the real world only fine-tune a small admittance residual online. [6]

Methods like this are less flashy than HIL-SERL or RL-100, but in industrial settings they are very practical. Most of the risk gets handled in sim, and real-world RL only applies a small residual update.
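For readers who haven't met admittance control, a minimal sketch helps show why a small residual is enough. It assumes the standard 1-D admittance law M·a + D·v + K·(x − x_ref) = f_ext and a residual applied to the stiffness only, clipped to a fraction of the sim-learned value. The parameter values, the 20% clip, and the function names are illustrative assumptions, not the paper's.

```python
def admittance_step(x, v, x_ref, f_ext, mass, damping, stiffness, dt):
    """One semi-implicit Euler step of M*a + D*v + K*(x - x_ref) = f_ext."""
    a = (f_ext - damping * v - stiffness * (x - x_ref)) / mass
    v = v + a * dt
    x = x + v * dt
    return x, v

def apply_residual(base, residual, max_fraction=0.2):
    """Add a learned residual to a sim-trained parameter, clipped for safety."""
    limit = max_fraction * abs(base)
    return base + max(-limit, min(limit, residual))

def settle(stiffness, f_ext=2.0, mass=1.0, damping=25.0, dt=0.001, steps=5000):
    """Deflection from x_ref = 0 after holding a constant contact force."""
    x, v = 0.0, 0.0
    for _ in range(steps):
        x, v = admittance_step(x, v, 0.0, f_ext, mass, damping, stiffness, dt)
    return x

sim_stiffness = 100.0                                  # learned in simulation
real_stiffness = apply_residual(sim_stiffness, 50.0)   # residual clipped to +20

deflection_sim = settle(sim_stiffness)     # about f_ext / K = 0.02
deflection_real = settle(real_stiffness)   # about 2 / 120, i.e. stiffer response
```

Because the residual is bounded, the worst the online learner can do is nudge the compliance within a known band around the sim-trained controller, which is why this route is attractive when contact forces can damage parts.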

It’s probably fair to see this as an important side branch in the second stage: Real-World RL doesn’t always play the leading role, it can also sit as the final adaptation layer in sim-to-real.

Third Stage: From Task-Specific to General Policies

In the work so far, the main character is still the robot learning a single task.

π*0.6 does something slightly counter-intuitive: it changes the training target of RL from a specific task to a general policy.

A Good-Enough General VLA

Physical Intelligence released π0 in 2024. This model is essentially a vision-language-action (VLA) foundation model: internet-scale vision-language pretraining plus large-scale robot data, trained so that a single model can generalize across robots and tasks in a zero-shot and few-shot fashion. [7]

Its successors then added model scale, data, and architectural capacity on top, yielding a general policy that can basically get the job done on many household and simple industrial tasks. But, as with all the systems above, it hits the familiar ceiling: success rates are passable but still not quite good enough for real deployment.

That is the backdrop π*0.6 lands in.

RL with Experience & Corrections

The report describes a staged recipe: offline pretraining, supervised fine-tuning, and online correction-driven RL. [1]

Look closely at the learning signal, though, and Recap (RL with Experience & Corrections via Advantage-conditioned Policies) is closer to preference-conditioned supervised regression than to textbook RL. The value function regresses onto returns on the collected trajectories. The policy regresses onto actions in the dataset, but conditioned on advantage, so the model learns to bias toward higher-value choices. The crucial new piece compared with plain imitation is that failures are no longer thrown away as noise. They get labelled explicitly as negative, and the model learns what to actively avoid. [8]

Concretely, the pipeline looks like this:

- Run offline RL on the pretrained VLA so the model can tell good actions from bad on offline data. That means training a value function on the model’s own trajectories, regressing it onto sparse returns, computing an advantage signal that scores actions as better or worse than average, and feeding that advantage into the VLA as a condition

- For each specific task, fine-tune on human demos so the model has a decent starting point

- Deploy the model on the real robot and let it run the task on its own. Humans only step in on clear mistakes, and those corrections are logged as supervision in the failure states, feeding back into the RL loop above
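The value-then-advantage-then-condition chain in the steps above can be shown with a tabular toy. This is a sketch under strong assumptions, not the report's implementation: states and actions are strings, the value function is a per-state mean of sparse episode outcomes, the advantage is binarized at zero, and the "policy" is a table of action counts keyed by (state, is_good). Every name and every episode in the dataset is made up for illustration.

```python
from collections import Counter, defaultdict

# Each episode: list of (state, action) steps plus a sparse outcome (1 = success).
episodes = [
    ([("s0", "grasp_top"), ("s1", "lift_slow")], 1.0),
    ([("s0", "grasp_side"), ("s1", "lift_slow")], 0.0),
    ([("s0", "grasp_side"), ("s1", "lift_fast")], 0.0),
    ([("s0", "grasp_side"), ("s1", "lift_slow")], 0.0),
    ([("s0", "grasp_top"), ("s1", "lift_fast")], 0.0),
    ([("s0", "grasp_top"), ("s1", "lift_slow")], 1.0),
    ([("s0", "grasp_side"), ("s1", "lift_fast")], 0.0),
]

def fit_value(episodes):
    """Regress V(state) onto returns: here, the mean outcome per visited state."""
    returns = defaultdict(list)
    for steps, outcome in episodes:
        for state, _ in steps:
            returns[state].append(outcome)
    return {s: sum(r) / len(r) for s, r in returns.items()}

def label_with_advantage(episodes, value):
    """Tag each step with whether it did better than the value baseline."""
    labelled = []
    for steps, outcome in episodes:
        for state, action in steps:
            advantage = outcome - value[state]
            labelled.append((state, advantage > 0.0, action))
    return labelled

def fit_conditioned_policy(labelled):
    """Supervised 'regression' onto actions, keyed by (state, is_good)."""
    counts = defaultdict(Counter)
    for state, is_good, action in labelled:
        counts[(state, is_good)][action] += 1
    return counts

def act(policy, state):
    """At deployment, always ask for the 'good' behavior."""
    return policy[(state, True)].most_common(1)[0][0]

value = fit_value(episodes)
policy = fit_conditioned_policy(label_with_advantage(episodes, value))

# Plain imitation baseline: most frequent action per state, failures included.
bc_counts = defaultdict(Counter)
for steps, _ in episodes:
    for state, action in steps:
        bc_counts[state][action] += 1
bc_action_s0 = bc_counts["s0"].most_common(1)[0][0]
recap_style_action_s0 = act(policy, "s0")
```

In this dataset failures outnumber successes, so plain imitation copies the most common (and wrong) grasp, while the conditioned policy, asked for the "good" branch, recovers the grasp that actually led to success. The failures are still used: they are what defines the baseline and fills the "not good" branch.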

The role of RL here is mostly to expose errors and turn failures into training signal, while the advantage-conditioned policy spreads those fixes to nearby states.

Does it work? The report gives specific numbers and cases. On complex tasks like making espresso, assembling cardboard boxes, and folding different kinds of clothes, adding Recap roughly doubles the throughput (successful tasks per unit time), and cuts the failure rate to half or less. They run robots from 5:30 AM to 11:30 PM making coffee, or fold 50 unseen garments in a stranger’s house, or assemble 59 real packaging boxes on a factory line, all without model errors ending the run early.

Zoom out, and π*0.6 stands very naturally on the shoulders of the earlier work:

- Like HIL-SERL, it uses the demos + human corrections + RL trio to attack long-tail failures

- Like RL-100, it treats RL as a final repair layer that takes you from sometimes wrong to rarely wrong

- But it goes further: it isn’t optimizing a policy for one task, it’s fine-tuning a general-purpose large model

At this point, Real-World RL’s role has shifted from a skill-learning algorithm to a last-mile training tool for a general policy.

Summary and Outlook

If you compress the whole trajectory, it reads something like this.

Early work showed that real-world RL is possible at all. Later systems started solving stability and efficiency. And models like π*0.6 have folded Real-World RL into a general robot training pipeline.

The research paradigm has been shifting quietly alongside. Early on, the discussion was about new RL algorithms. Then people realized that most of the problems are actually about system engineering. Later still, RL is increasingly used to patch the long-tail errors a large model makes in the real world.

Simulation still has value, but its role is different. It looks more like a pre-training stage than the final learning ground.

Some directions that look interesting from here:

- Larger-scale real-world data

- More automated human intervention and safety mechanisms

- More complex manipulation tasks, especially dexterous manipulation

From a system-design angle, Real-World RL is essentially answering two questions right now. Which capabilities should be handled by demos or offline training, so the model is already reasonably competent. And which problems genuinely need RL to touch the real world.

The answer π*0.6 gives is pretty plain: pretraining and demos get the system to a point where it can finish tasks, and Real-World RL takes care of the failure cases, slowly closing whatever gap is left.

Footnotes

1. π*0.6: A VLA that Learns from Experience. Physical Intelligence Blog, 2025-11-17. https://www.pi.website/blog/pistar06

2. DayDreamer: World Models for Physical Robot Learning. CoRL 2022. https://danijar.com/project/daydreamer/

3. RoboCat: A Self-Improving Generalist Agent for Robotic Manipulation. DeepMind, 2023. https://arxiv.org/abs/2306.11706

4. HIL-SERL: Precise and Dexterous Robotic Manipulation via Human-in-the-Loop Sample-Efficient Robotic Reinforcement Learning. Science Robotics, 2025. https://hil-serl.github.io/

5. Kun Lei et al. RL-100: Performant Robotic Manipulation with Real-World Reinforcement Learning. arXiv:2510.14830, 2025. https://arxiv.org/abs/2510.14830

6. Xiang Zhang et al. Efficient Sim-to-real Transfer of Contact-Rich Manipulation Skills with Online Admittance Residual Learning. CoRL 2023. https://arxiv.org/abs/2310.10509

7. π0: A Vision-Language-Action Flow Model for General Robot Control. Physical Intelligence Blog, 2024-10-31. https://www.physicalintelligence.company/blog/pi0

8. Pi 0.6: Supervised Learning Dressed Up as Reinforcement Learning (in Chinese), 2026-01-13. https://mp.weixin.qq.com/s/O7QOFeyjMDlg8Y5xDVbJNA